Racially motivated discrimination against Asian people has surged since the early outbreaks of the COVID-19 pandemic in 2020. Many incidents in which people of ‘Asian’ appearance were subjected to verbal and even physical abuse have been reported in Australia and other Western countries, such as the US. Studies in the UK have established a link between, on the one hand, fear of the virus and frustration with COVID-related public health restrictions and, on the other, anti-Asian sentiment and hatred. While the magnitude and frequency of these incidents might have gradually diminished over time, the online space continues to be a hostile environment that mirrors and shapes Asian Australians’ frustration and fears of racism during a time of significant uncertainty.

Through a national survey conducted between 28 April 2021 and 7 June 2021, we explored the experiences of 413 young Asian Australians with anti-Asian racism on social media and their strategies to cope with the abusive and harmful content. We found that young Asian Australians were conscious of the anti-Asian sentiments, and that they have learned to utilise existing platform functions and mechanisms to protect themselves and the wider community.

The findings lead us to reflect on some practical actions that social media platforms should and can take to address the issue of online racism and to forge a safe, inclusive and resilient environment.

The study

We surveyed 413 young adults (16-30 years old) who self-identified as ‘Asian’ or ‘Asian Australian’ and were living in Australia during the COVID-19 pandemic. The survey attracted a balanced sample of female (51.1 percent) and male respondents (48.8 percent). Nearly six in ten respondents (58.8 percent) were international students. Just under 10 percent (9.7 percent) of respondents were 16-17 years old and completed the survey with their guardians’ consent and supervision; 47.5 percent were aged 18-24 years old, and 42.8 percent were aged 25-30 years old. Most of our respondents resided in Australia’s most populous state, New South Wales (42.5 percent), or its second most populous state, Victoria (25.4 percent). Most respondents had obtained a bachelor’s degree (37 percent) or above (23 percent). While English was the primary language spoken among our respondents (36.8 percent), Nepali-speaking respondents stood out as an emerging language group in the Asian Australian population (22.7 percent). This observation is consistent with a surge in Nepalese immigration to Australia since 2009, with most of these immigrants residing in New South Wales. The other main language groups in this survey were Mandarin (10.9 percent) and Vietnamese (10.7 percent).

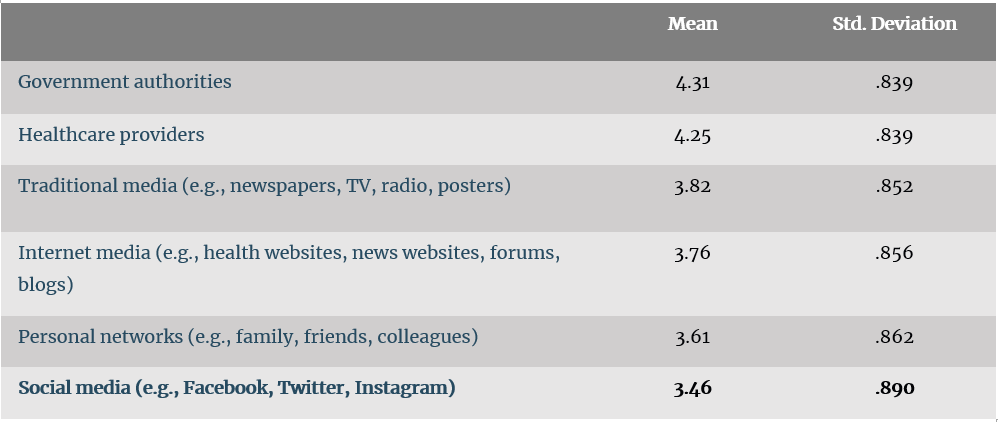

Although social media was the most used means of obtaining COVID-19 information (mean (M) = 4.09 on a 5-point frequency scale; standard deviation (SD) = 0.94), it was also the source young Asian Australians trusted the least (see Table 1).

Table 1 – Young Asian Australians’ trust in COVID-19 information sources

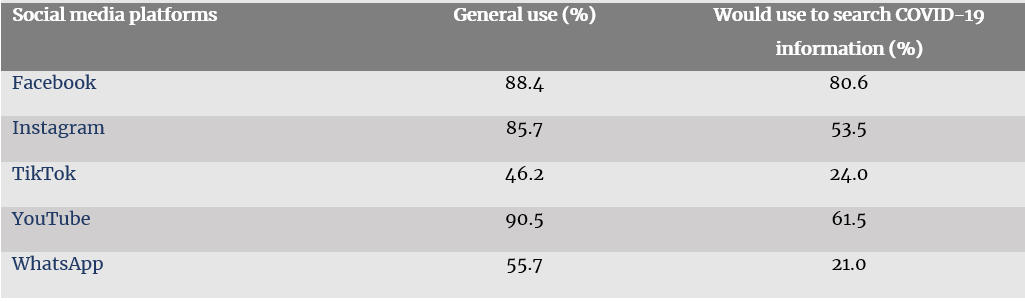

This paradox shows that young Asian Australians approach social media with caution and critical perspectives in relation to COVID-19-related content. The paradox also explains the obvious drop in their social media use for COVID-19-related news and information compared to general use (Table 2).

Table 2 – Top five social media platforms used among young Asian Australians

How did young Asian Australians cope with COVID-related racism on social media?

Our survey asked respondents how often they had been exposed to anti-Asian content in relation to COVID-19 on social media since the outbreak of the pandemic: 13.6 percent said ‘never’, 29.5 percent ‘rarely’, 36.3 percent ‘sometimes’, 15.5 percent ‘often’, and 5.1 percent ‘all the time’. Many of our survey respondents did not experience racism directed at themselves (M = 1.96, SD = 1.18, on a 5-point Likert scale) but felt an overall rise in racism. When we invited respondents to elaborate on their social media experience with racism and anti-racism, several referred to anti-Asian incidents in the U.S., as in this comment:

‘Videos […] (about Asian hate and racist incidents) spreading across social media should serve as raising social awareness of reducing incidents like this from happening.’

Another comment also highlights the ‘indirectness’ of their experience:

‘I read news and articles as well as watch anti-Asian racism and hate crimes and it does not feel good. The hatred makes me sick.’

For those who reported they had been exposed to some anti-Asian content on social media (n = 357), we further asked how they had responded to such content (‘choose all that apply’).

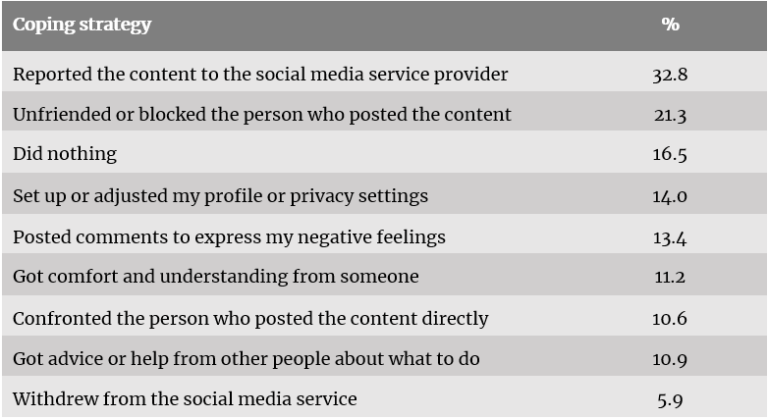

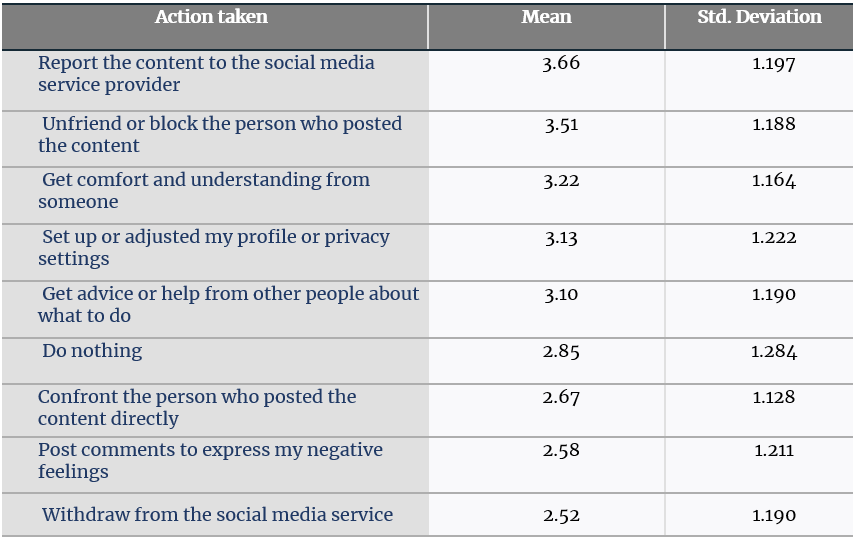

Table 3 displays the coping strategies adopted by the respondents.

Table 3 – Social media coping strategies

The top two coping strategies, reporting the content to the social media service provider (32.8 percent) and ‘unfriending’ or blocking (21.3 percent), are direct actions that utilise existing functions on social media. This suggests that some young Asian Australians have taken action to protect themselves from online racism by navigating the technological options available on the platforms they use.

However, the third most frequently adopted strategy is ‘nothing’ (16.5 percent), which suggests that many young Asian Australians did not feel empowered or confident in dealing with racism on social media. Further, young Asian Australians were even less likely to comment directly to express their negative feelings (13.4 percent) or to confront the person who posted the content (10.6 percent).

Resilience

Public conversations relating to racism and hate crime in Australia and elsewhere have rightly focused on governments’ responses, civic awareness and the responsibilities of bystanders, as well as a general reflection on the issues related to multiculturalism. A Facebook whistle-blower’s recent claim that Facebook knew about its platform’s role in creating social stigmatisation and harm against young people has heightened the need to hold social media companies accountable for the protection, safety, and wellbeing of their users. Yet, our survey respondents have also found opportunities to counter abusive and hateful content by utilising existing mechanisms on the respective social media platforms.

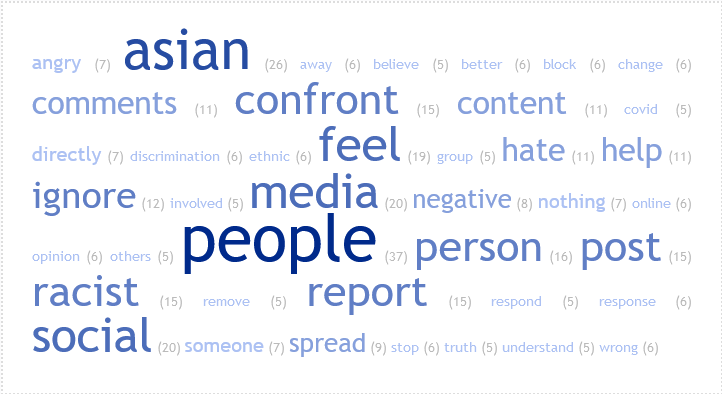

The survey invited respondents who said they had taken action in response to anti-Asian content (Table 3) to elaborate on the reasons for their action, and 109 provided further comments. Figure 1 displays the 50 most frequent words that emerged from the responses to this question (minimum frequency: 5). It is clear that respondents felt strongly about their Asian identity and that a mix of emotions underlay some of the respondents’ actions: angry, (feeling) better and negativ(ity).

Figure 1 – Frequent words used by those who said they had taken action in response to anti-Asian content

Generated by TagCrowd

Survey respondents also indicated their intention to become more involved in addressing racism and actively countering it (‘comments’, ‘confront’, ‘directly’, ‘report’ and ‘spread’) rather than passively waiting for third-party regulators to step in. There was a clear sense of advocacy: (to) ‘confront’, ‘help’, ‘change’, ‘respond’/‘response’, and ‘stop’.

This proactive stance was confirmed when we asked respondents what they would do if they encountered racist content (‘choose all that apply’) (Table 4).

Table 4 – Intended social media coping strategy

As the top responses in Table 4 show, young Asian Australians demonstrated resilience in their expressed intention to take a proactive approach, reporting racist content and/or blocking the person posting it, instead of passively avoiding the issue. They also expressed a desire for mutual support networks (‘get comfort and understanding from someone’) during a difficult time.

Social media platforms as intermediaries and actors

We focus here on Facebook, Instagram and YouTube as they were the most used social media platforms among our respondents. Conceptually, our survey’s findings point to an opportunity to build a collaborative approach between platforms, users and other stakeholders to address online racism and discrimination in general. Previous scholarship on this topic tends to view social media platforms as intermediaries that can connect social actors to work collaboratively. A telling example is Facebook’s use in classroom learning and education.

However, we go further by proposing that social media platforms are themselves actors that can work with other actors, such as users and regulators, to mitigate online hate and harm and build younger users’ resilience.

We recognise that social media spaces are highly volatile and dynamic, and that content is created and distributed in real time. However, we can harness social media companies’ power to develop effective governance through their affordances of connecting individuals, scaling up private experience to matters of public interest, intermediating multiple but interconnected activities, and mobilising unused resources.

Current debates on social media platform governance mainly centre on self-regulation by technology companies and social media operators versus oversight and regulation by external agencies. This dichotomy often misses the opportunity to consider the middle ground, which is a collaborative approach between multiple actors. Based on young Asian Australians’ actions and intentions as highlighted above, and the premise that social media platforms do have a duty of care to their users, there are several practical things platforms can do.

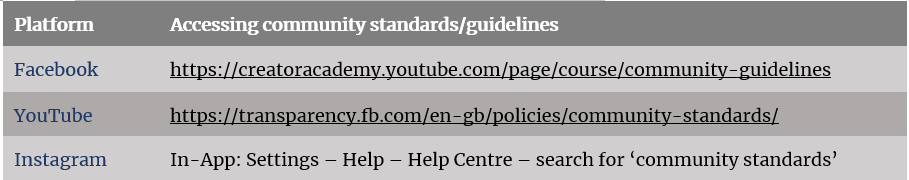

First, platforms should actively communicate their community guidelines to users. The appropriate regulatory framework should require platforms to make their community guidelines (which all mainstream social media services have) easily accessible from their respective landing pages or homepages. This is not yet the case for the three social media platforms under investigation here.

The URLs of YouTube’s and Facebook’s community guidelines (see Table 5) suggest that this type of information is external to their main websites and apps. Users have to search for this information in order to find it, either through a general online search or in-platform search.

Table 5 – Pathways to accessing community standards/guidelines

Further, none of the three social media platforms makes a reasonable attempt to remind users about the expected community standards and guidelines during the process of content creation and publication (original content or comments). We suggest platforms insert a pop-up reminder of their community standards, with a link to this information, just before users post their content.

The second thing all platforms can do is to proactively support users’ online literacy by running appropriate campaigns to promote a healthy and safe online environment and responsible social media use. Social media companies should develop and feature promotional materials on their platforms to inform and remind users about the community standards, how to access help, and the options available to protect themselves from abusive and harmful content. Our survey findings suggest that while many respondents developed coping strategies (reporting, blocking, and changing account privacy), a sizable number of respondents were uncertain about the available options and steps they could take and felt vulnerable when encountering racist or other harmful content.

We also found that all three platforms failed to make the ‘reporting’ function visible to users as a ‘response’ to the content (an original post or a comment made by another user). None has a ‘flag’ icon, which many email services have, to indicate that users can either report the content or disengage from the content and its creator (by blocking or hiding). We suggest that making a visible flagging function available is essential to improving users’ awareness, especially younger users’ awareness, of their responsibilities and rights as social media users.

Finally, external regulators such as governments should take a discursive, supervisory approach to:

- working with platform operators to implement the above two actions; and

- promoting digital literacy and training to the public, especially to vulnerable users such as youth from multicultural backgrounds.

Digital literacy education and training could be incorporated into government school curricula and become part of tertiary student induction programs. Instead of directly intervening in everyday social media operations, governments should take a supervisory approach to ensure that the interaction between platforms and users is ethical and meaningful and helps build community resilience and social cohesion.

Authors: Dr Wilfred Yang Wang, Dr Wonsun Shin and Dr Jay Song.

Acknowledgement: This study was funded by the University of Melbourne Chancellery (COVID-19 Impact on Society Seed Fund).

Image credit: pxhere.